
In the rapidly advancing world of technology, some innovations quietly revolutionize how we live, work, and interact, yet often remain hidden in the shadows of more prominent trends. This blog aims to uncover six underrated gems in technology that deserve your time and attention.

From advancements in Cloud Computing to the underappreciated IT and Data Management software, these technologies have the potential to reshape industries and enhance our digital experiences.

Let us explore how these hidden gems continue to propel us into a more innovative and interconnected future.

Some of the underrated technologies that deserve your time and attention are…

- IT Management Software

IT Management Software serves as the backbone of modern organizational efficiency, providing a comprehensive set of tools designed to streamline and optimize various aspects of information technology. This software encompasses a variety of functionalities, ranging from asset and project management to network monitoring and security protocols.

With the ability to automate routine tasks, track hardware and software assets, and facilitate seamless collaboration among IT teams, IT Management Software empowers organizations to enhance productivity, reduce downtime, and ensure the overall health and security of their IT infrastructure.

John Buccola, CTO of E78 Partners, says, “Anything that falls into the category of IT management tools is often cast aside, but these are the workhorses of IT.” He lists Active Directory and access and management solutions among the noteworthy IT management tools, as they can simplify heterogeneous environments.

- Cloud Computing

Mark Taylor, CEO of the Society for Information Management, says, “Cloud has been one of the most enabling technology shifts we have ever had, and because of the move to cloud, it enables us to do everything else we are doing now. But it has gone completely to the background because AI has sucked up all the air.”

While Cloud Computing has been instrumental in reshaping the IT landscape, the emergence of newer technologies has somewhat shifted the spotlight away from its promising capabilities.

Despite being instrumental to modern digital infrastructure, Cloud Computing may appear overshadowed by the excitement surrounding certain emerging trends. However, it is crucial to recognize that Cloud Computing remains the backbone of many technological advancements, offering unparalleled scalability, accessibility, and cost-effectiveness.

Even as newer technologies grab the attention of many, the enduring influence of Cloud Computing continues to support the seamless functioning of contemporary digital landscapes.

Our team can assist your organization in making a smooth and successful transition to the cloud. Book a free consultation with us today to start your cloud journey.

- Cloud-Based ERPs

While Cloud-based ERPs have been pivotal in modernizing business operations, the advent of innovative technologies has occasionally shifted the focus away from their significance.

Amidst the buzz surrounding emerging trends, Cloud-based ERPs might seem momentarily overshadowed. However, it is crucial to recognize that these systems continue to be the foundation of efficient data management, seamless collaboration, and streamlined processes for businesses.

As newer technologies make waves, the enduring impact of Cloud-based ERPs continues, ensuring they remain a foundational element in the ever-evolving landscape of enterprise resource planning.

Jeff Stovall, CIO of Abt Associates, says, “Cloud-based enterprise resource planning (ERP) is another behind-the-scenes technology that often gets overlooked in favour of newer, glossier tech.” He further adds that cloud-based ERPs are rarely credited for how critical they are for digital transformation.

- Cloud Migration Tools

Cloud Migration Tools, while remaining crucial to seamless transitions, may be somewhat overshadowed by the emergence of newer and trendier technologies.

However, it is crucial to acknowledge that Cloud Migration Tools continue to play a significant role in facilitating smooth transitions from on-premises environments to cloud platforms.

Their importance may not always be at the forefront of discussions, but these tools remain essential for organizations navigating the complex process of migrating their digital assets to the cloud, ensuring scalability, flexibility, and optimal performance.

For an in-depth understanding of Cloud Migration strategies, please download our exclusive whitepaper resource.

Yugal Joshi, a partner at research and advisory firm Everest Group, speaks about the relevance of Cloud Migration Tools and says, “CIOs sometimes think they do not need this tool because moving to cloud has become so pervasive. They think migration is easy, but it is complex, and the choices of cloud vendors and offerings have increased, [adding to that complexity].”

- IT Plumbing and Back Office Components

The foundational components, referred to as “basic IT plumbing” and back-office mainstays, may occasionally be overlooked due to the emergence of newer and more glamorous technologies.

These back-office mainstays include the fundamental elements of IT infrastructure management, networking, and system administration. Although they may not always grab the attention, these elements remain the unsung heroes, providing the necessary groundwork for the seamless functioning of more innovative technologies and ensuring the reliability and stability of the entire IT ecosystem.

- Data Management Software

Amidst the buzz surrounding artificial intelligence, machine learning, and other cutting-edge innovations, effective Data Management serves as the foundation that determines the success and reliability of these advancements.

Data Management assists in organizing, storing, and safeguarding vast volumes of information, ensuring its accuracy, accessibility, and security. As organizations increasingly leverage data as a strategic asset, proper governance and management become essential.

Trending technologies often rely heavily on high-quality, well-managed data for optimal performance. Artificial intelligence algorithms require clean and relevant data for training models. Without a robust Data Management framework, these technologies may falter or fail to realize their full potential.
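As a minimal sketch of what “clean and relevant data” can mean in practice, the Python snippet below drops incomplete and duplicate records before they would reach a training step. The record fields (`customer_id`, `spend`) and the function name `clean_records` are hypothetical and chosen only for illustration; real data pipelines usually need far richer validation rules.

```python
def clean_records(records, required_fields):
    """Keep records that have every required field, dropping duplicates
    (two records count as duplicates if their required fields match)."""
    seen = set()
    cleaned = []
    for record in records:
        # Skip records with missing or empty required values.
        if any(record.get(field) in (None, "") for field in required_fields):
            continue
        # Use the required fields as a de-duplication key.
        key = tuple(record[field] for field in required_fields)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(record)
    return cleaned


# Hypothetical sample data.
raw = [
    {"customer_id": 1, "spend": 120.0},
    {"customer_id": 1, "spend": 120.0},  # duplicate
    {"customer_id": 2, "spend": None},   # missing value
    {"customer_id": 3, "spend": 75.5},
]
print(clean_records(raw, ["customer_id", "spend"]))
```

Only the first and last records survive here, which is the kind of filtering a Data Management layer would be expected to perform before data is handed to a model.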

In conclusion, as we journey through the technological landscape, it becomes evident that innovation goes beyond the latest trends. The six underhyped technologies explored in this blog are instrumental in quietly shaping the future of IT.

By embracing these underappreciated innovations, we open ourselves to a world of possibilities, pushing the boundaries of what is known and setting the stage for a more inclusive and dynamic technological landscape. It is time to give credit where it is due and embrace a new era of recognition for these deserving technologies.

Explore the Future of Technology with us. Dive into our wealth of knowledge through insightful whitepapers and engaging blogs.

Stay at the forefront of IT innovations and discover how our resources can empower your business. Visit us now to access valuable industry insights and expertise. Elevate your technology journey with Inovar Tech today!

References:

- 6 most underhyped technologies in IT — plus one that’s not dead yet. CIO

In the rapidly evolving world of technology, the infrastructure that supports our digital ecosystem is constantly changing. Hence, it becomes crucial to understand the trends that are shaping the future of cloud, data centre, and edge infrastructure. These trends not only impact businesses but also influence the way we live and work in the digital age.

To know more about the Technology Trends you should look for this year, please refer to our blog.

The future of cloud, data centre, and edge infrastructure is a dynamic and exciting space, driven by innovation, adaptation, and unprecedented growth. To navigate this evolving terrain effectively, it is essential to gain insight into the trends that are set to shape the technological landscape ahead.

Gartner, Inc. emphasized four trends that are set to influence cloud, data centre, and edge infrastructure in 2023. These trends are coming into focus as infrastructure and operations (I&O) teams adapt to facilitate modern technologies and work methodologies in a year characterized by economic uncertainty.

Let us explore four significant trends that are set to reshape the landscape this year and beyond.

Trend 1: Cloud Teams Will Optimize and Refactor Cloud Infrastructure

Gartner projects that by 2027, 65% of application workloads will be in an optimal or cloud-ready state, up from 45% in 2022.

While the use of the public cloud has become nearly ubiquitous, many deployments lack structure and are executed poorly. In the current year, infrastructure and operations (I&O) teams have an opportunity to reassess poorly designed cloud infrastructure to enhance its efficiency, resilience, and cost-effectiveness.

The primary focus of revamping cloud infrastructure should revolve around cost optimization by eliminating underutilized resources, prioritizing business resilience over service-level redundancy, utilizing cloud infrastructure as a means to mitigate supply chain disruptions, and modernizing the overall infrastructure.
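To make “eliminating underutilized resources” more concrete, here is a small sketch in Python. It assumes you already have an inventory with average CPU utilization and monthly cost per resource; the field names, the 10% threshold, and the sample figures are all hypothetical, and a real review would look at more signals than CPU alone.

```python
def find_underutilized(resources, cpu_threshold=10.0):
    """Return names of resources whose average CPU utilization (%) is below the threshold."""
    return [r["name"] for r in resources if r["avg_cpu_percent"] < cpu_threshold]


def monthly_savings(resources, names):
    """Sum the monthly cost of the named resources."""
    selected = set(names)
    return sum(r["monthly_cost"] for r in resources if r["name"] in selected)


# Hypothetical inventory; in practice this would come from a monitoring or billing export.
inventory = [
    {"name": "web-1", "avg_cpu_percent": 55.0, "monthly_cost": 140.0},
    {"name": "batch-old", "avg_cpu_percent": 2.5, "monthly_cost": 90.0},
    {"name": "test-env", "avg_cpu_percent": 4.0, "monthly_cost": 60.0},
]
idle = find_underutilized(inventory)
print(idle, monthly_savings(inventory, idle))
```

The flagged list is a starting point for a conversation with the owning teams, not an automatic shutdown list.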

For detailed insights on Cloud Computing and Migration Strategies, please download this free whitepaper resource.

Trend 2: New Application Architectures Will Demand New Kinds of Infrastructure

Gartner projects that by 2026, approximately 15% of on-premises production workloads will be containerized, a substantial increase from less than 5% in 2022.

Infrastructure and Operations (I&O) teams face an ongoing challenge in adapting to evolving and expanding demands, driven by the need for new types of infrastructure. These include edge infrastructure for data-intensive use cases, non-x86 architectures tailored for specialized workloads, serverless edge architectures, and the integration of 5G mobile services.

I&O professionals must approach alternative options with a discerning eye, emphasizing their capacity to effectively manage, integrate, and evolve within the constraints of limited time, talent, and resources.

As Paul Delory, VP Analyst at Gartner, points out, “Don’t revert to traditional methods or solutions solely based on past success. Challenging periods are opportunities to innovate and discover fresh solutions that can meet the evolving demands of businesses.”

For a detailed understanding of Cloud Architecture, please check out our informative blog.

Trend 3: Data Center Teams Will Adopt Cloud Principles On-Premises

Gartner forecasts that by 2027, approximately 35% of data centre infrastructure will be administered through a cloud-based control plane, a significant increase from less than 10% in 2022.

Data centres are experiencing a size reduction and are shifting towards platform-based co-location providers. This, when combined with emerging as-a-service models for physical infrastructure, has the potential to introduce cloud-like service-oriented approaches and economic frameworks to on-premises infrastructure.

In the current year, Infrastructure and Operations (I&O) professionals should concentrate on three key strategies: constructing cloud-native infrastructure within the data centre, transferring workloads from self-owned facilities to co-location facilities or edge locations, and embracing as-a-service models for physical infrastructure. These actions are pivotal for staying ahead in the rapidly evolving IT landscape.

Trend 4: Successful Organizations Will Make Skills Growth Their Highest Priority

Gartner projects that by 2027, approximately 60% of data centre infrastructure teams will possess the relevant skills in automation and cloud, a substantial increase from 30% in 2022.
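For a rough sense of the pace these projections imply, the sketch below converts two of the Gartner figures quoted in this post (45% to 65% for cloud-ready workloads, and 30% to 60% for skilled teams, both 2022 to 2027) into an implied compound annual growth rate. Smooth year-over-year compounding is our own simplifying assumption and is not something Gartner states.

```python
def implied_annual_growth(start_share, end_share, years):
    """Compound annual growth rate that takes start_share to end_share over `years`."""
    return (end_share / start_share) ** (1 / years) - 1


# Figures quoted in this post; the smooth-compounding model is an assumption.
projections = [
    ("cloud-ready workloads", 45, 65),
    ("teams skilled in automation and cloud", 30, 60),
]
for label, start, end in projections:
    rate = implied_annual_growth(start, end, 2027 - 2022)
    print(f"{label}: +{end - start} points, about {rate:.1%} per year")
```

Under that assumption, the skills figure would need to grow roughly twice as fast each year as the workload figure, which fits the emphasis on skills growth in this trend.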

The main hindrance to infrastructure modernization initiatives continues to be the scarcity of skilled personnel. Many organizations encounter difficulties in hiring external talent to bridge these skill gaps. To thrive in this environment, IT organizations must prioritize the organic growth of skills.

In the current year, Infrastructure and Operations (I&O) leaders must elevate skills development to the top of their agenda. They should actively encourage I&O professionals to assume new roles, such as site reliability engineers or subject matter expert consultants for developer teams and business units.

Investing in skills development is crucial for staying competitive and meeting the evolving demands of the IT landscape.

The acceleration of technology and the increasing demands of a digital society have necessitated a shift in our approach to infrastructure. The challenges of 2023 are, in fact, opportunities for innovation, adaptation, and growth. In a world that is becoming more interconnected and data-driven every day, staying ahead of the curve is essential.

With Gartner’s projections in mind and the ever-increasing pace of change, it is crucial to emphasize the importance of continuous skills development.

As IT organizations and professionals navigate these trends, upskilling and embracing new roles will be pivotal to success.

In 2023, the future of digital infrastructure is calling us to adapt, innovate, and lead with resilience. The journey may be challenging, but the rewards are transformative.

As we navigate this exciting world of cloud, data centre, and edge infrastructure, we must remember that knowledge and proactive adaptation will be our guiding force.

We must stay informed, be agile, and keep a watchful eye on the horizon, for the world of technology never stops evolving.

The trends we have explored are just the beginning, and there is no doubt that the road ahead will be filled with exciting opportunities and uncharted territories. We must collaboratively embrace the future with confidence, and it will be our greatest ally in the years to come.

If you would like to understand our Cloud Computing and Cloud Migration approaches and strategies, please visit our website or book a free consultation with our Cloud Computing experts today.

References:

Gartner Says Four Trends Are Shaping the Future of Cloud, Data Center, and Edge Infrastructure. (Gartner)

In the rapidly evolving world of Cloud Computing, Multi-Cloud and Multiple Cloud are two terms that sound similar but mean very different things.

Today, we want to help you understand these terms better. What do they mean individually? What benefits do they bring to the table? And how are they different?

For an in-depth understanding of Cloud Migration strategies, best practices, and approaches, download our exclusive whitepaper resource.

As more organizations are moving to the cloud and leveraging cloud computing solutions for their IT infrastructure, understanding these differences becomes crucial.

Often confused with one another, Multi-Cloud and Multiple Cloud represent distinct approaches to cloud deployment and management.

In this blog post, we will delve into the nuances of these terms and explore how each approach impacts your organization.

THE MULTI-CLOUD: A STRATEGIC APPROACH

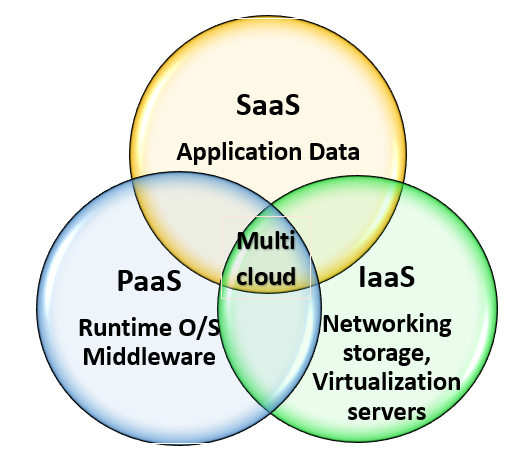

Multi-cloud is a cloud computing strategy that uses services and resources from multiple cloud service providers, such as Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform (GCP) simultaneously.

Instead of relying on a single cloud provider, organizations using a multi-cloud approach distribute their workloads and applications across different cloud platforms based on the specific needs of each workload and the strengths of each cloud service provider.

The key idea is to avoid vendor lock-in and leverage the strengths of various cloud platforms for specific tasks or workloads.

HOW IS MULTI-CLOUD USEFUL?

- Reduced Vendor Lock-In

Multi-cloud strategies try to avoid dependency on a single cloud provider. By using several cloud platforms, organizations can prevent being restricted to one vendor’s technologies and pricing structures.

- Optimized Performance

Different cloud providers have their own set of strengths and capabilities. By strategically assigning workloads to the most suitable cloud platform, organizations can improve performance and enhance cost efficiency. For example, they may use AWS for compute-intensive tasks and GCP for machine learning.

- Enhanced Reliability

Multi-cloud can provide redundancy and failover options. If one cloud provider experiences downtime or issues, the organization can easily shift its operations to another provider.

- Compliance and Data Sovereignty

Organizations can choose specific cloud providers based on compliance and data sovereignty requirements. For instance, a company subject to strict data regulations in different regions may opt for cloud providers with data centres in those regions.

- Cost Optimization

Multi-cloud strategies can be used to take advantage of cost variations between cloud providers. It involves cost-conscious decision-making, where workloads are allocated to the provider offering the most cost-effective solutions.

- Disaster Recovery

Multi-cloud can help with disaster recovery and business continuity. Data and applications can be duplicated across multiple cloud providers to ensure high availability and data resilience.

- Flexibility and Innovation

By having access to various cloud ecosystems, organizations can leverage the latest innovations and technologies offered by different providers. This enables them to stay at the forefront of technological advancements.
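The performance and cost points in the list above boil down to a placement decision: pick a provider that is good enough for the workload, then prefer the cheaper one. The Python sketch below illustrates that idea. The provider names, capability scores, and hourly prices are entirely hypothetical and do not describe any real vendor.

```python
# Hypothetical capability scores (higher is better) and hourly prices.
PROVIDERS = {
    "provider_a": {"compute": 9, "ml": 6, "price_per_hour": 0.12},
    "provider_b": {"compute": 7, "ml": 9, "price_per_hour": 0.15},
    "provider_c": {"compute": 6, "ml": 5, "price_per_hour": 0.08},
}


def place_workload(kind, min_score, providers=PROVIDERS):
    """Pick the cheapest provider whose score for `kind` meets min_score."""
    eligible = {name: p for name, p in providers.items() if p[kind] >= min_score}
    if not eligible:
        raise ValueError(f"no provider meets score {min_score} for {kind}")
    return min(eligible, key=lambda name: eligible[name]["price_per_hour"])


print(place_workload("compute", 7))  # cheapest provider scoring at least 7 on compute
print(place_workload("ml", 8))       # only one provider scores at least 8 on ML
```

In a real multi-cloud setup the scores would come from benchmarks and compliance requirements rather than a hand-written table, but the shape of the decision stays the same.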

Implementing a multi-cloud strategy requires careful planning, management, and monitoring to ensure the various cloud environments work seamlessly together.
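One piece of that planning is deciding how traffic shifts when a provider has problems. As a minimal sketch, the snippet below walks a priority-ordered list of providers and returns the first one that passes a health check. The provider names and the simulated `status` table are assumptions for illustration; a real check would probe each environment's endpoints.

```python
def first_healthy(providers, is_healthy):
    """Return the first provider in priority order that passes the health check."""
    for provider in providers:
        if is_healthy(provider):
            return provider
    raise RuntimeError("no healthy provider available")


# Simulated health status (hypothetical); a real check would call each provider's endpoint.
status = {"primary-cloud": False, "secondary-cloud": True}
active = first_healthy(["primary-cloud", "secondary-cloud"], lambda name: status[name])
print(active)
```

The ordering of the list encodes the organization's preference, so failover behavior stays an explicit, reviewable decision rather than an accident.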

To understand if Cloud Computing is worth your investment, check out our informational video by clicking on this link.

UNDERSTANDING THE MULTIPLE CLOUD APPROACH

“Multiple cloud” is a less common term, but it could be used in a general sense to describe an environment where an organization uses multiple instances or deployments of a single cloud provider’s services.

THE “MULTIPLE CLOUD” SCENARIO CAN RESULT IN

- Lack of Coordination

Users or departments within an organization may independently adopt cloud services without a clear strategic plan for integration or coordination. It can result in a scattered approach where various cloud services are used without considering how they interact or share data.

- Data Silos

Since different cloud services or platforms are not integrated or coordinated, data may become fragmented and isolated within these services, making it challenging to share or analyze data across the various cloud environments.

- Resource Inefficiency

Using multiple cloud services without a strategic plan can lead to resource inefficiency, as users may not take advantage of cost-saving opportunities or optimized configurations.

- Increased Complexity

Managing and securing several disconnected cloud services can be more complex and challenging compared to a well-coordinated multi-cloud approach.

- Security and Compliance Risks

When various cloud services are utilized without a comprehensive strategy, it can introduce security and compliance risks, as each service may have different security and compliance requirements.

Let us now look at the distinguishing factors between Multi-Cloud and Multiple Cloud:

MULTI-CLOUD VS. MULTIPLE CLOUD

- Multi-Cloud maintains a single copy of the data that is accessible from all cloud environments simultaneously. Multiple Cloud leads to duplicate data, where each copy of the data can be accessed from a single cloud only.

- Multi-Cloud avoids vendor lock-in. Multiple Cloud presents users with complex integration and data management methods.

- In Multi-Cloud, organizations can select the right cloud computing services and resources. In Multiple Cloud, organizations must work with multiple IPs and volumes of data.

- In Multi-Cloud, organizations can track cost savings accurately, which in turn makes costs easier to predict. In Multiple Cloud, egress charges are unpredictable, and organizations are also burdened with data transfer expenses.

- In Multi-Cloud, organizations can experience a data-driven digital transformation of their IT infrastructure. Multiple Cloud opens organizations up to synchronization issues.
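To see why duplicated data makes transfer costs add up, here is a rough back-of-the-envelope sketch. It estimates the monthly cost of keeping extra copies of a dataset in sync, assuming a fixed fraction of the data changes each day and every change must be transferred to each extra copy. The dataset size, change rate, and per-GB price are hypothetical placeholders, not any provider's actual rates.

```python
def monthly_egress_cost(dataset_gb, daily_change_rate, copies, price_per_gb, days=30):
    """Estimate the cost of syncing daily changes to each extra copy of a dataset."""
    extra_copies = max(copies - 1, 0)  # the primary copy needs no transfer
    changed_gb_per_day = dataset_gb * daily_change_rate
    return changed_gb_per_day * extra_copies * price_per_gb * days


# Hypothetical inputs: 10 TB dataset, 2% daily change, $0.09 per GB transferred.
single = monthly_egress_cost(10_000, 0.02, copies=1, price_per_gb=0.09)
duplicated = monthly_egress_cost(10_000, 0.02, copies=3, price_per_gb=0.09)
print(single, round(duplicated, 2))
```

With a single shared copy the sync cost in this model is zero, while each additional copy adds a recurring charge that grows with both data volume and change rate.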

Remember, the distinction between multi-cloud and multiple cloud is a strategic choice that can significantly impact an organization’s cloud computing experience.

Multi-cloud is a strategic approach, where careful planning, integration, and vendor diversification lead to optimized performance, cost efficiency, and risk reduction.

On the other hand, multiple cloud often results from decentralized adoption, which may create complexities, inefficiencies, and data silos. Businesses must recognize the significant differences between these two approaches and carefully decide which one aligns best with their goals, resources, and long-term cloud strategy.

Ultimately, the choice between multi-cloud and multiple cloud depends on how an organization wants to harness the limitless potential of the Cloud.

If you would like to understand our Cloud Computing and Cloud Migration approaches, please visit our website for insightful case studies and whitepapers.

References:

Is my data architecture multi-cloud or multiple cloud? (CloudTweaks)

Businesses today are competing to make the most out of the $3 trillion opportunity that Cloud-based platforms present in EBITDA.

A crucial factor to consider when moving to the cloud is the resilience of applications, as most of the cloud’s value lies in running business-critical operations.

Compared with on-premises environments, the cloud can offer faster recovery times, better compliance support, and upgraded tools that provide strong resiliency capabilities. Companies must design, architect, and adopt appropriate resiliency patterns to meet their specific requirements and to leverage these cloud capabilities.

Cloud resiliency patterns are targeted towards reducing technical debt, automating operations, and equipping applications with cloud capabilities.

Understanding Cloud Resiliency

Cloud resiliency is the capability of cloud infrastructure to maintain high availability, reliability, and fault tolerance even during unexpected disruptions such as hardware failures, network issues, or natural disasters. It is the backbone of an organization’s digital operations, ensuring that critical services and data remain accessible at all times.
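Availability targets are easier to reason about once they are turned into hours. The sketch below, written in Python, converts an availability figure into expected downtime per year and shows how redundancy improves availability. It assumes replica failures are independent, which is a simplification that real systems only approximate, and the sample figures are illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8760


def downtime_hours_per_year(availability):
    """Expected yearly downtime for a given availability (for example 0.999)."""
    return (1 - availability) * HOURS_PER_YEAR


def redundant_availability(single_availability, replicas):
    """Availability of N replicas when one healthy replica is enough.

    Assumes failures are independent, which is a simplification.
    """
    return 1 - (1 - single_availability) ** replicas


print(round(downtime_hours_per_year(0.999), 2))   # 8.76 hours per year
print(round(redundant_availability(0.99, 2), 6))  # 0.9999
```

Two replicas that are each available 99% of the time reach 99.99% together under this model, which is why redundancy sits at the centre of most resiliency patterns.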

Amid rapidly evolving customer expectations and regulatory demands, leaders and industry professionals have expressed genuine concerns over ensuring that their critical applications are always functioning and available.

These concerns may explain why few companies have moved their critical applications to the cloud. A survey of leaders and technology experts revealed that only 10% had successfully moved their critical and mission-based processes to the cloud.

Want to move to the cloud? Download our exclusive Cloud Migration White paper for detailed insights.

Cloud-related issues can be tackled by conducting two crucial analyses:

The first analysis can help determine the financial and reputational impact on the business. Organizations often tend to underestimate or overestimate these costs, which leads to inconsistencies in the decision-making process.

The second analysis helps businesses compare on-premises operating costs with cloud-related expenses. Cloud-based solutions are often more cost-effective and flexible because their pay-as-you-go model can accommodate usage surges.
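For the first analysis, a simple starting point is to estimate the direct annual cost of outages. The sketch below multiplies outage frequency, duration, and hourly revenue, and adds a fixed recovery cost per incident. All inputs are hypothetical, and reputational impact is deliberately left out because it is much harder to quantify.

```python
def expected_outage_cost(outages_per_year, hours_per_outage, revenue_per_hour, recovery_cost):
    """Rough annual cost: lost revenue plus a fixed recovery cost per outage."""
    lost_revenue = outages_per_year * hours_per_outage * revenue_per_hour
    return lost_revenue + outages_per_year * recovery_cost


# Hypothetical inputs for a single business-critical application.
print(expected_outage_cost(outages_per_year=4, hours_per_outage=2,
                           revenue_per_hour=5_000, recovery_cost=1_500))
```

Even a crude estimate like this gives decision-makers a shared number to challenge, which helps avoid the under- or overestimation mentioned above.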

To derive cost benefits, leaders must ensure that their applications are designed well, their infrastructure is well developed and configured, and the lifecycle of the existing hardware is also considered.

Organizations can avoid expensive capital investments and hardware-related costs by migrating critical operations into the cloud, especially before any major changes in their hardware structures. Strong FinOps capabilities are required to acquire and build on these insights.
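The on-premises versus cloud comparison can be sketched in a few lines. The example below assumes on-premises capacity must be sized for peak demand and paid for all month, while cloud capacity is billed per server-hour actually used. The demand profile and both prices are hypothetical and chosen only to show the mechanics.

```python
def on_prem_monthly_cost(peak_servers, cost_per_server):
    """On-premises capacity is sized for peak demand and paid for all month."""
    return peak_servers * cost_per_server


def cloud_monthly_cost(hourly_demand, price_per_server_hour):
    """Pay-as-you-go: pay only for the server-hours actually used."""
    return sum(hourly_demand) * price_per_server_hour


# Hypothetical month: 2 servers for 700 hours, then a 20-hour surge to 10 servers.
demand = [2] * 700 + [10] * 20
print(on_prem_monthly_cost(peak_servers=10, cost_per_server=150))
print(round(cloud_monthly_cost(demand, price_per_server_hour=0.30), 2))
```

The gap narrows as demand becomes flatter, so the same calculation with a steady load profile may favour existing hardware, which is why the lifecycle of current equipment belongs in the analysis.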

Why Is Cloud Resiliency Important?

- Business Continuity:

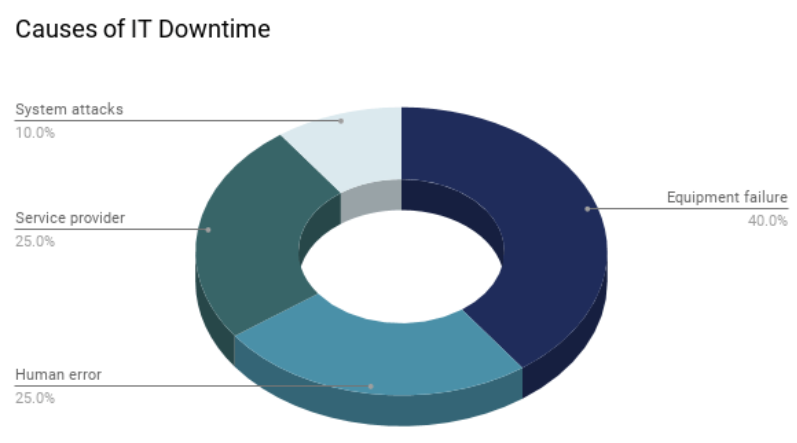

Cloud resiliency is fundamental for business continuity. Downtime can lead to significant financial losses, damage to reputation, and customer dissatisfaction. Cloud services minimize downtime, helping businesses stay operational in all circumstances.

According to a survey conducted by Gartner, organizations that rely on cloud technology for over 75% of their backup and recovery requirements can expect to reduce their overall cost of business continuity and disaster recovery by 60%. (3)

- Scalability:

The cloud allows businesses to scale their resources up or down as needed. A resilient cloud infrastructure ensures that this scaling happens seamlessly, enabling organizations to meet sudden increases in demand without service interruptions. A forecast reported by Forbes projected that 83% of enterprise workloads would be in the cloud by 2020. (2)

- Disaster Recovery:

In the event of a disaster or a hardware failure, cloud resiliency plays a crucial role in disaster recovery. Data replication, backup, and redundancy strategies implemented by cloud providers help safeguard data and applications, allowing for quick recovery. A survey by the Disaster Recovery Journal found that 46% of organizations using the cloud for disaster recovery reported faster recovery times. (4)

- Security:

Security is a significant aspect of resiliency. Cloud providers invest heavily in cybersecurity measures, protecting against cyber threats and data breaches. It enhances the overall resiliency of cloud-based systems.

- Cost-Efficiency:

Building a resilient on-premises infrastructure can be expensive and complicated. In contrast, cloud resiliency is usually more cost-effective. Cloud providers manage the complexities of redundancy, failover, and disaster recovery, enabling businesses to concentrate on their primary operations without the stress of extensive infrastructure management.

- Competitive Advantage:

Companies that can provide uninterrupted services have a competitive advantage. Clients value reliability and are more likely to choose firms that can guarantee their services will be available when needed.

Forbes reported that 74% of Tech Chief Financial Officers (CFOs) believe cloud computing has the most measurable impact on their business. (5)

- Adaptability:

Resilient Cloud infrastructures can adapt to changing business needs. They enable organizations to expand their digital footprint and implement new technologies without disruptions.
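The scalability point above can be illustrated with a proportional scaling rule: grow or shrink the number of instances in proportion to how far a load metric is from its target. This is the same general form as the rule documented for the Kubernetes Horizontal Pod Autoscaler, but the sketch below is a simplified stand-alone version; the function name, limits, and sample numbers are our own assumptions.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling rule, clamped to a min/max range."""
    desired = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


# Load is above target (90% vs 60% CPU): scale out from 4 to 6 instances.
print(desired_replicas(4, current_metric=90, target_metric=60))
# Load is well below target: scale in to the minimum of 1 instance.
print(desired_replicas(4, current_metric=15, target_metric=60))
```

The clamping matters as much as the formula: the upper bound caps spending during a surge, and the lower bound keeps at least one instance ready to serve requests.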

How Can Cloud Providers Help With Resiliency?

- Redundancy

Cloud providers deploy redundant hardware and network frameworks across multiple data centres. When one data centre experiences an issue, traffic can be accordingly rerouted to another, minimizing disruptions.

- Data Replication

Cloud providers often replicate data across multiple geographic regions. It ensures data availability even if a specific area faces a disaster or outage.

- Load Balancing

Load balancing distributes incoming traffic across multiple servers, preventing overloads on any single server and ensuring high availability and performance.

- Automatic Failover

Cloud services are architected with automatic failover mechanisms. If a server or data centre experiences a failure, services are automatically shifted to a healthy one, reducing downtime.

- Monitoring and Alerts

Cloud providers regularly monitor their infrastructure and services. They use advanced monitoring tools and artificial intelligence to detect issues and send alerts to administrators.
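Load balancing and automatic failover from the list above can be combined in one small model. The sketch below cycles through servers in round-robin order and skips any server that monitoring has marked as unhealthy. The class, its method names, and the server names are hypothetical; cloud load balancers are far more sophisticated, but the core behaviour is similar.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Round-robin over servers, skipping any marked unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._ring = cycle(self.servers)

    def mark_down(self, server):
        """Record a failed health check for a server."""
        self.healthy.discard(server)

    def mark_up(self, server):
        """Record that a known server has recovered."""
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self):
        # Try each server at most once per call.
        for _ in range(len(self.servers)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy servers")


lb = RoundRobinBalancer(["dc1-a", "dc1-b", "dc2-a"])
lb.mark_down("dc1-b")  # simulated failure reported by monitoring
print([lb.next_server() for _ in range(4)])
```

Requests keep flowing to the two remaining servers, including one in a second data centre, without any change on the caller's side.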

Critical Steps To Take Towards Cloud Resiliency

To launch cloud resiliency, companies must be aware of potential risks and equip themselves with the required resources to mitigate them.

Here are five critical steps to adopt cloud resiliency:

- The first step is prioritizing urgent business operations, identifying all related applications, and aligning the organization’s strategic goals with the impact analysis results.

- The second step is to identify the pain points and technical hindrances organizations encounter during cloud migration. In addition to this, companies must also define resiliency patterns to meet specific business needs.

- Companies must design a roadmap to implement a trial lighthouse to accelerate the learning process and add value.

- Organizations must also identify gaps in processes (such as incident, problem, and change management) and talent (including unfilled engineering roles) in conjunction with the architecture.

- The last step should be setting achievable goals for making the cloud resilient and ensuring that it aligns with business goals, duration, and costs.

Cloud resiliency is vital for ensuring the availability, reliability, and security of digital services in an increasingly interconnected world. It not only safeguards businesses against disruptions but also enables them to thrive, innovate, and remain competitive in a rapidly evolving digital landscape.

Consequently, it is a key consideration for organizations when selecting cloud providers and designing their cloud-based solutions. Businesses that harness the power of the cloud’s resiliency are better prepared to deal with disruptions and continue delivering their services without hindrances.

For more insights on Cloud Migration and Top Trends in the Cloud, please refer to our informational video on Top Cloud Native Trends to Watch in 2023.

References:

- (2) 83% Of Enterprise Workloads Will Be In The Cloud By 2020. (Forbes)

- (3) Source: Gartner

- (4) Source: Disaster Recovery Journal

- (5) Roundup Of Cloud Computing Forecasts, 2017. (Forbes)

Serverless Computing

Serverless computing is a cloud computing architecture model in which the cloud service provider allocates hardware resources on demand. The provider owns the physical servers and handles their setup, management, and maintenance. It allocates server resources only during execution and releases them when the application is idle or not in use. Customers pay the provider only for what they use and need not worry about backend infrastructure capacity or maintenance.

Serverless Computing offerings

There are several reasons to adopt serverless computing over conventional server-centric data centers or traditional cloud deployments. Serverless architecture is flexible and economical. It offers greater scalability and a shorter turnaround time for releases. Organizations save the cost and time of planning infrastructure space, purchasing hardware, and installing and maintaining servers.

Advantages of Serverless Computing

No server management

Since the vendor manages the planning, installation, and maintenance of the physical servers, organizations and developers need not worry about server maintenance or DevOps. The labor and logistics costs saved can be reinvested in more productive areas or in opportunities that generate returns.

Scalability

Serverless computing scales automatically as demand or usage increases. If a function needs to run in multiple instances, the service provider’s servers start, run, and end them as needed, using containers.

Pay-as-you-go

Code runs only when the serverless application needs its backend functions, and it scales up automatically as needed. Some providers meter usage at a very fine granularity, so charges closely reflect the time actually used. This can result in substantial savings compared to a conventional server setup, where organizations bear the operational expenses of servers irrespective of their usage.
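A pay-as-you-go bill is often built from two parts: compute time, commonly measured in GB-seconds (memory multiplied by execution time), and a charge per request. The sketch below shows that calculation in Python. The billing model is a generic assumption, and the prices and usage figures are hypothetical placeholders rather than any provider's published rates.

```python
def serverless_monthly_cost(invocations, avg_duration_ms, memory_gb,
                            price_per_gb_second, price_per_million_requests):
    """Cost = compute charge (GB-seconds) + per-request charge."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * memory_gb
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return gb_seconds * price_per_gb_second + request_cost


# Hypothetical usage: 2 million calls, 200 ms each, 0.5 GB of memory.
cost = serverless_monthly_cost(
    invocations=2_000_000,
    avg_duration_ms=200,
    memory_gb=0.5,
    price_per_gb_second=0.00002,      # placeholder price
    price_per_million_requests=0.20,  # placeholder price
)
print(round(cost, 2))
```

Because every term scales with the number of invocations, an application that receives no traffic costs nothing to run in this model.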

Performance for continuously running code

If an application’s code runs regularly, its performance in a serverless environment will be the same as in a traditional server environment, irrespective of the number of instances running in parallel. Code that is invoked regularly stays ready and needs only a short cycle to start, called a “warm start”.

Reduced latency

Since the code is not tied to a single origin server, it can run from anywhere, including locations very close to the end-user, thus decreasing latency.

Faster deployment and easy updates

In a serverless environment, since the code is maintained in the cloud, developers can quickly and easily update their code to develop and release newer versions of the application. Developers can choose to upload the code either one function at a time or all at once because the application is typically a collection of functions provisioned by the service provider.

This helps in applying and releasing the patches or updates with bug fixes or new features quite fast.

Disadvantages of serverless computing

Security concerns

“Multitenancy” means that multiple independent end-users share the same infrastructure, such as hardware resources, in serverless computing. If the multi-tenant servers are not configured properly, this sharing can result in security breaches and data theft or misuse. If the servers are configured and maintained correctly and the vendor is properly vetted, organizations can still reap the benefits.

Costly for long-running processes

If applications are designed to run for long durations, the cost of serverless compute may exceed that of traditional server services. The benefits of serverless computing are therefore largely limited to applications that run for short durations.
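A rough way to see where long-running code stops being economical is to compute the break-even utilization: the fraction of the month a function must be busy before its usage-based bill equals the bill for an always-on server. The sketch below assumes hypothetical prices and ignores per-request fees for simplicity.

```python
def break_even_utilization(server_monthly_cost, memory_gb,
                           price_per_gb_second, hours_in_month=730):
    """Fraction of the month a function must run for both bills to be equal."""
    busy_second_cost = memory_gb * price_per_gb_second
    seconds_in_month = hours_in_month * 3600
    return server_monthly_cost / (busy_second_cost * seconds_in_month)


# Hypothetical figures: a $73/month server versus a 2 GB function
# billed at $0.00002 per GB-second.
utilization = break_even_utilization(73.0, 2, 0.00002)
print(f"break-even at about {utilization:.0%} utilization")
```

With these assumed figures, a function that is busy for more than roughly two thirds of the month would cost more than the always-on server, which is why short, bursty workloads are the better fit.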

Challenges in testing and debugging

It is challenging to simulate a serverless environment to see how the code will execute once deployed. With no access to the backend processes, and with the application broken into smaller functions, developers may find it hard to test or debug issues.

Performance degrades for irregular runs

When serverless code is not running constantly, booting it up to handle a request can take considerable time and hurt performance. This is called a “cold start”.
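The difference between a cold start and a warm start can be sketched with a toy model. The class name and the fixed keep-alive window below are assumptions made for illustration; real platforms decide when to reclaim idle instances in their own ways.

```python
class ServerlessFunction:
    """Toy model: an instance stays warm for a while after each invocation."""

    def __init__(self, keep_alive_seconds):
        self.keep_alive_seconds = keep_alive_seconds  # hypothetical idle window
        self.last_invoked_at = None

    def invoke(self, now):
        """Return 'cold' if the instance had to boot, otherwise 'warm'."""
        is_cold = (self.last_invoked_at is None
                   or now - self.last_invoked_at > self.keep_alive_seconds)
        self.last_invoked_at = now
        return "cold" if is_cold else "warm"


fn = ServerlessFunction(keep_alive_seconds=300)
# Invocation times in seconds: two close together, then a long gap.
starts = [fn.invoke(t) for t in (0, 60, 1000, 1100)]
print(starts)
```

The first call and the call after the long idle gap are cold, while calls that arrive within the keep-alive window are warm.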

Single vendor dependency

Select a cloud service provider whose features and workflows are exposed through open, generic APIs, which leaves the option of switching to another vendor if need be. Depending on a single serverless cloud service provider can be risky when unforeseen circumstances arise.

When do you need a serverless architecture?

Serverless architecture is the preferred choice for developers if:

1. They want to reduce their go-to-market time.

2. They want to build lightweight and flexible applications.

3. Their apps need to scale or be updated frequently and quickly.

4. Their apps have inconsistent usage, peak periods, or traffic.

5. Their app functions need to be closer to the end-user to reduce latency.

When should you avoid using a serverless architecture?

Large applications that run for long durations or have consistent, predictable workloads may be better served by a traditional server than by a serverless architecture, in terms of both cost and architecture.

Inovar implements Serverless Cloud Computing

Achieving business goals with a collaborative approach and tailoring the use of apt cloud computing technology infrastructure is very important.

We have provided many of our customers with better infrastructure and ensured effortless, uninterrupted operations. We delivered the features that help SMEs find information and give them the right guidance. For many of our clients, we have digitalized the entire process with end-to-end workflow applications. Inovar has also helped a few clients deploy a hybrid cloud solution for data security and access control, and boosted their processes with serverless engines.

We ensured that each client’s service requirements were met and aligned with their business needs, with an improved user experience and substantial cost savings. If you are looking for services with best-in-class infrastructure and leaders who ensure your strategy lasts, reach out to us.

Take full advantage of the cloud computing model by containerizing the applications and building microservices for agility, flexibility, reproducibility & transparency.

Build new applications and modernize existing ones in accordance with cloud principles, optimized for product development and faster service delivery to your customers.

Benefits of Cloud-Native Application Platform

1. Faster Release

How fast an organization can conceive, build, and ship software determines its market standing against the competition.

User demand for new functions grows faster than your development processes can meet. You need a platform, methods, application services, and tools that can keep up without forcing you to leave behind the existing apps that your customers depend on. Cloud development methods – DevOps and agile – also guide cloud projects forward at a fast pace and resolve bottlenecks in a short time.

2. Easy Management

Serverless platforms like Azure Functions and AWS Lambda take the hassle out of managing infrastructure, so you don’t have to worry about configuring networks, allocating storage, or provisioning cloud instances, for example.

3. Cost Savings

Standardizing infrastructure and tooling across cloud platforms helps organizations reduce costs, because containerized applications are independent of the platform. One example is the open-source Kubernetes platform, a standard for managing resources in the cloud.

4. System Reliability

By using approaches like microservices and Kubernetes, you can build applications that are fault-tolerant, with self-healing built in. These approaches prevent an entire application from being affected or taken down when a technical failure hits, allowing you to isolate and work on the impacted area alone.

5. Scalability

Who doesn’t want the business to grow? With a growing business comes a growing scale of application use. Cloud-native development frees you from worrying about that growth: applications scale automatically, and you pay only for the services used.

Are you looking for some consultation on how you can achieve the above benefits? Email your queries or get in touch with one of our representatives & we’d love to get back to you.

About Inovar Consulting

Inovar delivers state-of-the-art smart solutions through its Consulting evangelists who help businesses identify and integrate emerging technologies and software initiatives that create a trusted customer experience and camaraderie.

SaaS, or Software as a Service, is making a buzz in almost every industry, and logistics is no exception. Businesses across the globe prefer SaaS-based logistics software to investing in on-premises software that might take several months or even a year to build. To understand why this shift is happening, let’s first look at the problems the logistics industry is facing and how SaaS-based applications can be a good solution for them.

SaaS is a model for delivering software to anyone (individual or business) over the internet. In this software licensing and delivery model, the software is licensed on a periodic subscription basis. The software is hosted centrally in the cloud, and users access it through any compatible browser.

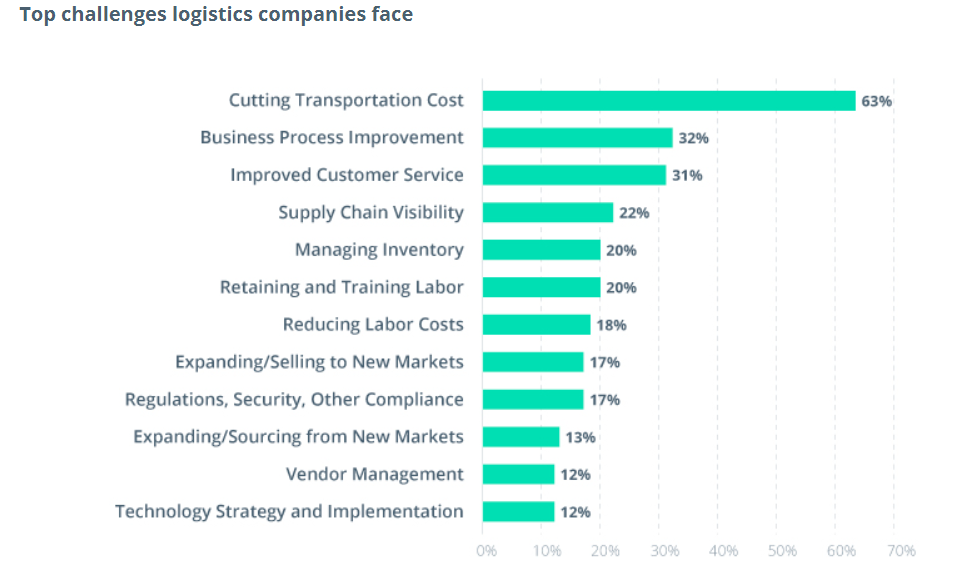

Present industry challenges:

Transportation and logistics companies constantly struggle with challenges such as intense competition, changing customer expectations, and the need to leverage digitization to succeed. A lack of ‘digital culture’ and training is thus the biggest challenge for these companies. Attracting the right skills is one issue, but developing the right strategy is even more crucial.

Besides, an increasingly competitive environment is another dominant factor in the mix. Some of the sector’s customers are starting logistics services of their own, and new entrants are carving out the more profitable parts of the value chain by exploiting digital technology or brand-new ‘sharing’ business models. These entrants don’t have asset-heavy balance sheets or cumbersome existing arrangements weighing them down.

In no other industry do so many experts expect data and analytics to have such a large influence over the next five years as in transportation and logistics. There are vast possibilities to enhance performance and serve customers better, and logistics service providers (LSPs) that are part of a digitally integrated value chain can benefit from significantly improved forecasting to scale capacity up or down and plan routes. Adding machine learning and artificial intelligence techniques to data analytics can deliver truly dynamic routing.

Business solutions

One of the most promising ways for transportation companies to improve their workflows and find new ideas for business growth is using a custom SaaS system. These systems guarantee more agile data processing and greater automation of operations than out-of-the-box solutions. They also allow companies to build only the features they need.

From streamlining processes to increasing visibility in the supply chain, the relevance of these systems has grown. According to Statista, 87% of logistics and supply chain firms are tapping into cloud-based solutions to facilitate transportation management and gain an edge in an ever-changing marketplace.

Businesses can optimize SaaS solutions to automate the logistics workflow and free employees from performing routine tasks connected with paperwork.

Logistics is a sphere of multitasking and multiprocessing. Robust SaaS solutions are essential for tracking details and conducting analysis, which helps businesses detect issues and optimize their work. They are designed to simplify and manage complicated logistics problems by taking an integrated, end-to-end approach. The functionality enables optimal route planning, reductions in fuel costs, and significant improvements in asset utilization.

Logistics companies gain real-time visibility into logistics processes and data from SaaS solutions, helping them keep their operations under control. SaaS logistics solutions help companies achieve a more predictable supply chain, and partners can connect in the cloud to cooperate efficiently.

SaaS solutions give operators on-demand access to high-level route planning capabilities, helping them avoid the high capital investment and the in-house IT staffing required for complex systems integration. Because they are delivered as a service, they can be scaled up or down according to business needs without infrastructure changes.

Inovar optimized SaaS solution for logistics

Inovar joined hands with fleet operators to manage their logistics operations and apply technology to automate their workflows. With an AI-powered SaaS solution, we gave fleet operators ways to predict demand and optimize vehicle usage, and gave both fleet operators and consumers real-time tracking of vehicles.

Our first aim was to bring all stakeholders (markets, warehouses, traders, ports, and truckers) onto a single virtual platform and allow them to trade on demand within an open marketplace.

Inovar brought Microsoft Azure IoT and machine learning services together within a customized application to build a connected platform for fleet operators. The solution not only gathered data efficiently but also generated intelligence as it was used. Another notable feature was the use of analytics and event hubs to capture data, which a Databricks platform then used to generate insights. Using these insights, booking, scheduling, and predictive maintenance cycles became 70% easier than before.

Finally, the system went live with 1000+ vehicles, and the logistics client started to reap ROI from multiple avenues. Within 3 months, the efficiency of their operations improved by 30% with better security, high scalability, and faster availability for the end-user.

Summing up, we can say that cloud logistics automation is beneficial to all participants in the supply chain. It makes complex problems easier to solve, so its popularity is likely to keep growing. As a logistics software development partner, we solve many standard and custom needs of the transportation industry from both business and technology perspectives. After many years in the logistics domain, our dedicated development team has gained the specific knowledge and experience to help you effectively with any logistics software you need to develop.

InovarTech is ready to improve your automation experience. With our brilliant forms and streamlined workflows, you will be equipped to tackle process technologies and adapt them to your institution. Reach out to us today to get started. You can take comfort in the fact that we know process automation and how to implement it effectively. Let us deliver high-volume processes to your doorstep.

The pandemic has changed how people around the world live, work, and engage with each other. When Covid-19 struck in late 2019, affecting millions of people all over the planet, the healthcare industry was hit the hardest. The pressing need for resources, information, and cost optimization, along with supply chain inefficiencies in the sector, calls for a major technology overhaul. Therefore, embracing new-age digital technologies is key for healthcare providers in addressing the immediate concerns and driving long-term goals.

Automation has brought revolutionary process change to most industries, and it has the same or even greater potential in the healthcare sector. Introducing business process automation (BPA) can simplify the healthcare system, increase service quality, and take over pen-and-paper manual tasks, saving time and cost without the slightest glitch.

Challenges in Healthcare

Application Disparity: Modern healthcare is a complex environment where many disparate applications work side by side. The complexity also grows with the business: with mergers and acquisitions, new technologies are adopted to meet new challenges. In this situation, managing all the technology becomes quite difficult for healthcare IT departments and business users.

For example, in most healthcare sectors, patient data is still transferred manually, in the form of files and documents, from one department to another. As the volumes of data are generally high, compiling them into a single record is tedious and lengthy. And since the work is done manually, there is always scope for human errors and miscalculations. The higher the error rate, the higher the cost of rectification.

Lack of efficiency in patient service is another challenge that can be a barrier to your organization’s growth and reputation. In most scenarios, customer care executives are so busy with paperwork and compiling files and documents that they cannot deliver prompt and timely service to customers.

I hope you can relate to the problems discussed above. Now I am going to share how automation becomes an integral part of solving some of these healthcare challenges.

Business automation software works with all kinds of programs, whether new applications, legacy programs, cloud-based solutions, or on-premises systems.

BPA makes human-oriented processes much smoother, with results such as increased speed, productivity, and efficiency. For a patient, scheduling an appointment with a doctor usually happens online in a matter of minutes, but what happens behind the scenes is more complicated. Healthcare institutions have to collect personal information, diagnosis, and insurance details, and confirm the doctor’s availability. If the right information cannot be accessed during the registration process, or if the doctor is not available, it is up to the staff to inform the patient beforehand.

Implementing automation can resolve some of the bottlenecks related to scheduling patient appointments. Bots can automate patient data collection and processing, so patients can be optimally scheduled according to diagnosis, location, doctor availability, and other criteria. By simplifying appointment scheduling, healthcare institutions can focus their efforts on providing exceptional service and other tasks that cannot be automated. Automation tools also assist customer care executives with automated call and email options.
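As a rough illustration of criteria-based scheduling, the sketch below matches a patient to the first doctor with the right specialty, the right location, and a free slot. The `Doctor` fields, the `schedule` function, and the sample names are all hypothetical; a real scheduling bot would work against the institution's own records and rules.

```python
from dataclasses import dataclass


@dataclass
class Doctor:
    name: str
    specialty: str
    location: str
    free_slots: list  # e.g. ["09:00", "10:30"], earliest first


def schedule(patient_need, patient_location, doctors):
    """Book the earliest slot with the first matching doctor, or return None."""
    for doctor in doctors:
        if (doctor.specialty == patient_need
                and doctor.location == patient_location
                and doctor.free_slots):
            slot = doctor.free_slots.pop(0)  # the slot is no longer available
            return doctor.name, slot
    return None


# Hypothetical sample data.
doctors = [
    Doctor("Dr. Rao", "cardiology", "Austin", ["09:00", "10:30"]),
    Doctor("Dr. Lee", "dermatology", "Austin", ["11:00"]),
]

first = schedule("cardiology", "Austin", doctors)
second = schedule("cardiology", "Austin", doctors)
third = schedule("cardiology", "Austin", doctors)  # no slots left
print(first, second, third)
```

When no doctor matches, the function returns `None`, which is the point at which staff would need to contact the patient.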

Inovar proposed Automation Solutions with better ROI

Something similar happened with one of our US healthcare clients, who faced customer support challenges during the pandemic. They were primarily using a pen-and-paper system for information collection and symptom analysis. After the outbreak of the virus, many patients started asking for support through their chatbot regarding diagnosis, vaccination, and other medical support.

With so many restrictions in place, it became necessary to let patients run the assessments on their own from the comfort of their homes. Remote work capability also became especially important, and the client needed an infrastructure that made it possible.

To manage all of this, they relied on a VPN that was not designed for such large-scale use, resulting in low bandwidth and frequent outages. Additionally, much of the content and many manual workflows required employees to be physically present on the hospital premises, which was nearly impossible at that moment.

In this critical situation, the first hurdle was to move them to a cloud-based solution to run care facilities across all their nationwide operations and provide the best health support to patients. This would make remote work possible for hospital staff in case they showed symptoms and had to quarantine at home.

In this situation, Inovar helped a leading US healthcare organization to automate their Covid-19 Helpdesk and Registration process from a pen and paper-based manual system to a cloud-based automated solution.

SharePoint Online, together with Power Platform, became the platform of choice. As the client’s primary productivity technologies, they made it possible to create a technology framework with a built-in mechanism for rapid growth and helped hospital staff manage the entire remote work process.

After implementing the initial solution, the healthcare organization faced the challenge of rolling it out to all its other US locations, which became much easier with SharePoint and Power Platform.

A simple but practical experience gained a high adoption rate within the healthcare organization. The organization’s IT team also considered it as the best rollout experience with almost a zero-touch provisioning and deployment strategy. Ultimately, with all these solutions 90% of their remote and quarantined caregivers were able to serve the patients over remote calls and Online consultation.

The digitization of call centers was a major bonus as it consisted of Automated Diagnosis for Covid, automatic decision-making capabilities, and other similar tools that ensured self-help services that were non-existent before. This resulted in a reduction of 70% in phone calls.

Businesses transitioning from manual processes to comprehensive workload automation tend to go through several phases on their automation journey. If you are facing the same difficulties within your organization, Inovar is the best partner to collaborate with and make your automation journey smoother. Feel free to reach out to us. We would love to collaborate!

With the fast pace of change in the cloud, cloud lock-in remains a popular refrain. But do you know what it means? How can you make sure that you’re maximizing your cloud investment while keeping portability in mind?

In this article, we are going to talk about how business owners and analysts can strategize the best cloud approach to maximize their ROI and gain ten times better solutions with the proper cloud architecture.

Cloud bursting! Hybrid cloud! On & Off-premises! Multi-cloud! Cloud strategists, analysts, and architects have become familiar with these phrases over the past ten years. Each of them makes logical sense, but in recent times it’s the last, multi-cloud, that I’ve seen most in actual practice.

If you are not clear about what multi-cloud is, let me explain!

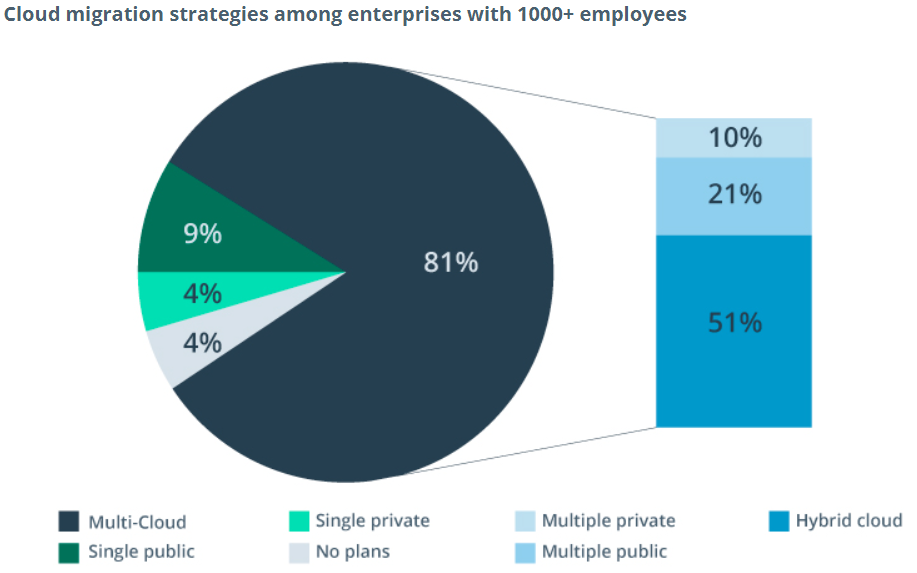

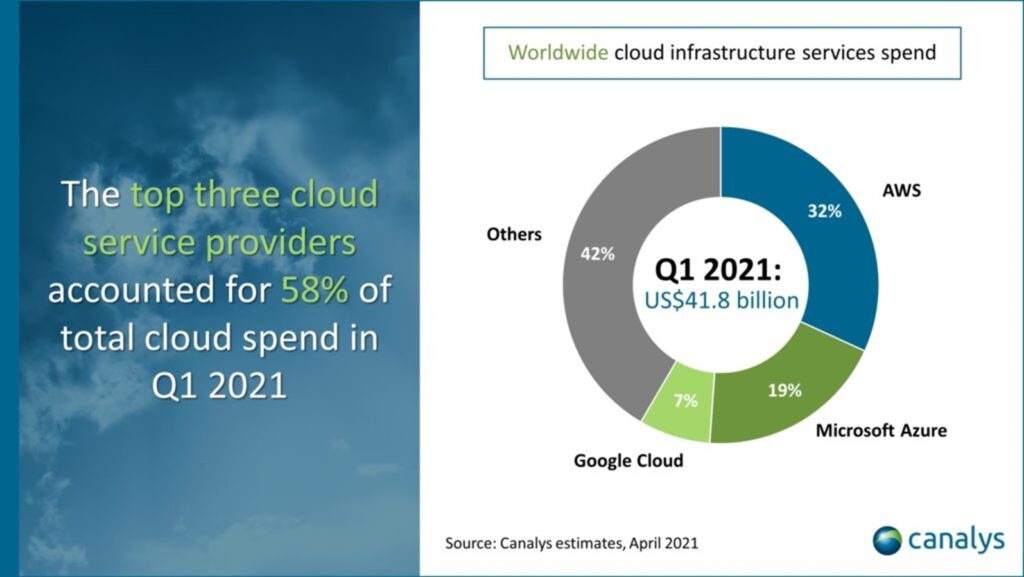

What is multi-cloud?

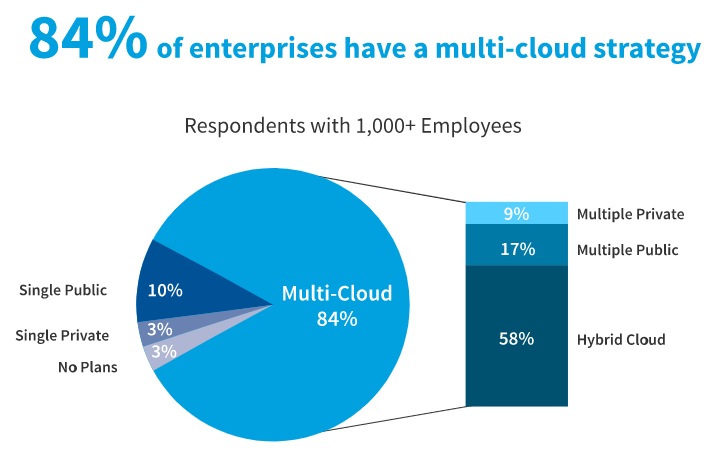

Multi-cloud is the use of two or more public and/or private cloud providers to support the IT services and infrastructure of an organization. A multi-cloud strategy typically consists of a mix of major public cloud providers, in particular Amazon Web Services (AWS), Google Cloud Platform (GCP), Microsoft Azure, IBM, and Alibaba.

Businesses that embrace a multi-cloud architecture may leverage various public clouds in combination with private cloud deployments and traditional on-premises infrastructure.

Organizations pick the best services from each cloud provider based on value, technical specifications, geographic availability, and other factors. Every day, organizations work with multiple types of data and diverse applications. Most cloud vendors specialize in a particular area, so being able to use different clouds gives companies the agility they need. This may mean that a company uses Google Cloud for development/test, AWS for disaster recovery, and Microsoft Azure to process business analytics data.

What is a multi-cloud strategy?

A multi-cloud environment can combine SaaS, PaaS, and IaaS deployments from more than one public or private cloud service provider to meet an organization’s technical and business needs.

For example, many businesses initially move into the cloud tentatively, one small service or application at a time. Before long, this becomes unwieldy, and you have to clean up your cloud sprawl. Hence, you need a strategy.

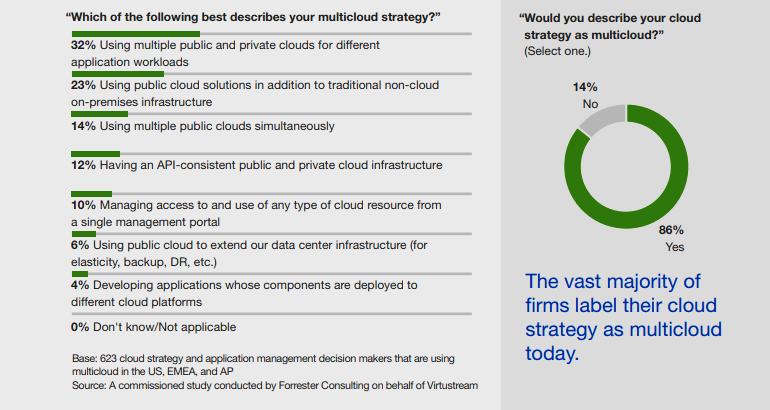

A multi-cloud approach lets organizations improve the efficiency of their IT spending and business operations by choosing an appropriate service and provider for each use case. The main concern of every organization applying a multi-cloud strategy is to identify its needs and align them with the best cloud providers. Forrester industry analysts conducted an in-depth analysis of over 600 cloud strategy professionals to determine the leading use cases for multi-cloud architecture.

Below, we discuss these use cases for a better understanding:

- Selectively deploying application workloads over multiple public and private clouds, depending on the application and business needs.

- Combining on-premises infrastructure and services from multiple public clouds in a hybrid cloud environment.

- Evolving API-consistent cloud architecture across both public and private clouds.

- Developing data-center abilities and extending disaster recovery capabilities.

- Governing access to cloud-based data, applications, and services within a specific control portal.

- Advancing applications with components deployed to various cloud platforms.

By applying the right multi-cloud approach, you can keep highly available apps in one cloud solution and sensitive data that you don’t need to access often in a different one. The second one might be slower but more secure, and that’s OK!

Continued IT investment will optimize multi-cloud architecture and deployments to realize actual strategic advantages and aspirations. Are you interested in a closer look at seven of the most influential drivers for multi-cloud investment?

Reasons Organizations Choose a Multi-Cloud Strategy

Recent Forrester research found that almost 80% of enterprises describe their strategy as a hybrid/multi-cloud one. Multi-cloud is quickly establishing itself as the future of business across every sector. To prevent vendor lock-in, drive down costs, and ensure agility, many organizations are now looking to multiple clouds for their operational needs.

According to the same research, only 42% of organizations regularly optimize their cloud spending, and only 41% maintain an approved service catalog. So, it is important to remember that without a strong understanding of what is being used and where, organizations risk forfeiting the benefits of a multi-cloud strategy and saddling themselves with high costs and security risks.

Avoiding Vendor Lock-In

Vendor lock-in occurs when an organization finds it too complex to move its business from one cloud service provider to another, or even to bring its data back on-premises.

Organizations that rely on a single cloud service provider typically develop applications that depend heavily on the unique capabilities of that vendor. As those organizations broaden their investment in that single cloud, switching providers becomes more expensive, complex, and time-consuming.

In contrast, organizations that have committed to a multi-cloud strategy purposely plan for agility and flexibility across multiple cloud providers. With the ability to move applications between public cloud vendors, they are poised to take advantage of new technologies from all providers and adopt the best-performing or most cost-effective services for specific application workloads.

Choosing a multi-cloud strategy can help your business avoid vendor lock-in, take advantage of new and better technologies from other providers, and adopt the most cost-effective and performance-enhanced compute or storage resources for each workload.

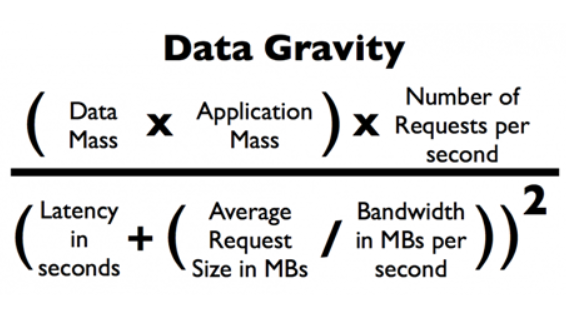

Overcoming Data Gravity

Data plays a significant role in daily operations for thousands of businesses. Organizations have traditionally kept their data in on-premises data centers where it could be accessed by legacy applications, but in the cloud computing era, more of them prefer to store data in the cloud and analyze it with cloud-based applications.

The term “Data Gravity” refers to the concept that large data sets are troublesome and expensive to move or migrate. If your organization keeps a large volume of data with a single cloud service provider, data gravity could push you to deploy related apps and services with the same provider—even when there are more cost-effective options accessible in another cloud.

Overcoming data gravity can be as manageable as using a cloud-attached storage solution that connects to multiple clouds simultaneously. The best solutions minimize latency by hosting your data close to cloud data centers.
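To get a feel for why large data sets are hard to move, the following sketch estimates the transfer fee and the transfer time for a migration. The egress price, the bandwidth, and the data set size are hypothetical assumptions, not any provider's published figures.

```python
def migration_estimate(dataset_tb, egress_price_per_gb, bandwidth_gbps):
    """Return (egress cost in dollars, transfer time in hours) for a data set."""
    dataset_gb = dataset_tb * 1000                       # decimal terabytes
    egress_cost = dataset_gb * egress_price_per_gb
    transfer_seconds = dataset_gb * 8 / bandwidth_gbps   # gigabits / (gigabits per second)
    return egress_cost, transfer_seconds / 3600


# Hypothetical scenario: 500 TB, $0.05 per GB egress, a sustained 10 Gbps link.
cost, hours = migration_estimate(500, 0.05, 10)
print(f"egress fee: ${cost:,.0f}, transfer time: {hours:.1f} hours")
```

Under these assumptions the move costs tens of thousands of dollars and takes several days of sustained transfer, which is the pull that keeps related applications next to the data.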

Better workload optimization

Each public cloud service provider offers its own blend of physical infrastructure components and application services, with varying functionality, usage characteristics, terms and conditions, and pricing. Providers also release new features regularly to make their services more efficient, cost-effective, and attractive to customers.

Hence, no single cloud provider can claim to provide cost-optimized services that cover every potential business need or use case. But when you switch to a multi-cloud strategy, you can choose the most suitable cloud service provider for each application or workload, leading to enhanced application performance and improved cost-efficiency.
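Choosing a provider per workload can be sketched as a simple lookup over a price table. The provider names, workloads, and monthly quotes below are made up for illustration, and a real decision would also weigh performance, compliance, and migration effort rather than price alone.

```python
def cheapest_provider(workload, price_table):
    """Pick the provider with the lowest quoted monthly price for a workload."""
    quotes = price_table[workload]
    return min(quotes, key=quotes.get)


# Hypothetical monthly quotes in dollars.
PRICE_TABLE = {
    "dev_test": {"provider_a": 400, "provider_b": 350, "provider_c": 500},
    "analytics": {"provider_a": 1200, "provider_b": 1500, "provider_c": 900},
}

placement = {w: cheapest_provider(w, PRICE_TABLE) for w in PRICE_TABLE}
print(placement)
```

In this made-up table, no single provider is cheapest for both workloads, which is the situation a multi-cloud strategy is meant to exploit.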

Elevating application performance

When cloud-based application services are delivered from servers at distant locations, data must travel across several network nodes before reaching the user. Along this path, high network latency can slow data transfers, degrade application performance, and negatively affect the user experience.

The market-leading public cloud service providers (AWS, Azure, and Google Cloud) operate multiple data centers in geographically different regions, establishing a network of availability zones that deliver high-speed service to customers and users worldwide. By adopting a multi-cloud strategy and leveraging cloud services from more than one vendor, organizations can enter new geographies and deliver better application and data performance for their users, wherever they are located.

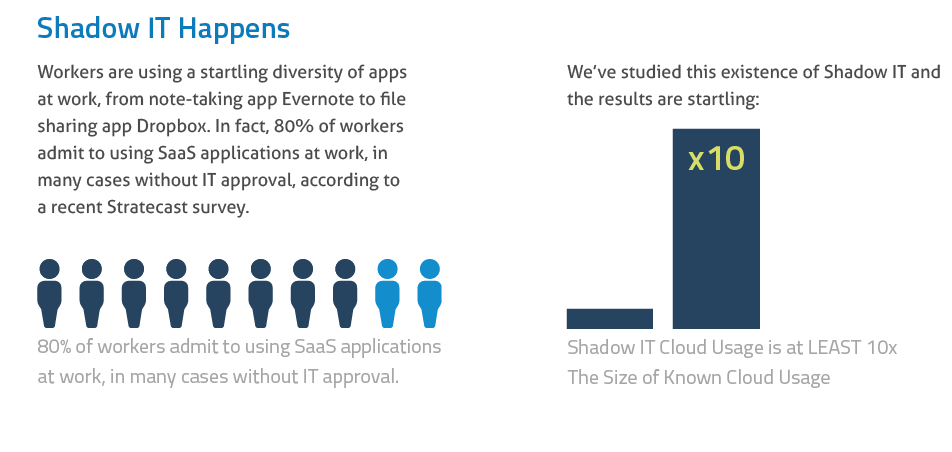

Curbing Shadow IT

Shadow IT happens when autonomous business units within an organization select technology solutions without the supervision of the IT department. It leads to security concerns, notably when staff members use unsecured platforms outside the organizational firewall to handle sensitive data. According to a Gartner prediction for 2020, 30% or more of successful cyberattacks would target shadow IT resources within enterprise organizations.

Organizations that adopt a multi-cloud approach can speed up their adoption of cloud services that drive employee productivity and collaboration, reducing the incentive for employees to deploy new technologies without going through the proper channels.

Enhance disaster recovery capabilities!

A good number of public cloud service providers offer 99.5% uptime as part of their service level agreements, yet unplanned outages happen, and they can be extremely costly. According to a 2019 IT survey, organizations experienced an average of 830 minutes of unplanned downtime during the year, at an average cost of $5.6 million.
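It helps to translate uptime percentages into minutes. The short sketch below converts an uptime commitment into the downtime it still permits over a 365-day year, and converts a downtime figure back into an availability percentage. The function names are ours, and the calculation assumes the percentage is measured over a full year, which individual agreements may define differently.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def allowed_downtime_minutes(uptime_percent):
    """Downtime per year that still satisfies the given uptime percentage."""
    return MINUTES_PER_YEAR * (1 - uptime_percent / 100)


def availability_percent(downtime_minutes):
    """Yearly availability implied by a given amount of downtime."""
    return 100 * (1 - downtime_minutes / MINUTES_PER_YEAR)


sla_budget = allowed_downtime_minutes(99.5)
survey_availability = availability_percent(830)
print(f"99.5% uptime allows about {sla_budget:.0f} minutes of downtime a year")
print(f"830 minutes of downtime is about {survey_availability:.2f}% availability")
```

A 99.5% commitment measured this way still leaves room for roughly 2,628 minutes of downtime a year, so an uptime figure on its own does not rule out long and costly outages.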

With a multi-cloud strategy, organizations can respond to unplanned service outages by failing over their workloads from one public cloud to another. We provide our clients with customized failover models based on application-specific needs, taking advantage of trade-offs between cost and performance to achieve a fully optimized disaster recovery strategy.
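The failover idea can be sketched in a few lines: try the primary cloud first and fall back to the next one when a call fails. The handler functions and the simulated outage below are placeholders for illustration; a production failover model would also cover health checks, data replication, and DNS or traffic routing.

```python
def call_with_failover(providers, request):
    """Try each provider in priority order; return (name, result) from the first success."""
    errors = {}
    for name, handler in providers:
        try:
            return name, handler(request)
        except Exception as exc:  # in practice, catch narrower, provider-specific errors
            errors[name] = exc
    raise RuntimeError(f"all providers failed: {list(errors)}")


def primary_cloud(request):
    # Hypothetical handler that simulates an outage.
    raise ConnectionError("simulated outage")


def secondary_cloud(request):
    # Hypothetical handler that serves the request normally.
    return f"handled {request}"


served_by, result = call_with_failover(
    [("primary", primary_cloud), ("secondary", secondary_cloud)], "order-42")
print(served_by, result)
```

The order of the provider list encodes the priority, so a cheaper or slower cloud can sit last and be used only during an outage.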

Meeting regulatory compliance and requirements

More organizations than ever must now meet regulatory compliance provisions known as data localization or data residency. Data localization laws may prohibit organizations from exporting data about a nation’s residents to other countries, requiring that the data be processed and stored in the same region where it was collected.

Through a multi-cloud strategy, organizations can comply with data localization or residency laws with the help of cloud service providers that offer regional availability zones and data storage infrastructure.
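In code, a residency policy often comes down to a mapping from where data was collected to where it may be stored. The country codes, region names, and default below are hypothetical and do not reflect any actual law or provider region list.

```python
# Hypothetical residency rules: country of collection -> storage region.
RESIDENCY_RULES = {"DE": "eu-central", "FR": "eu-west", "IN": "in-south"}
DEFAULT_REGION = "us-east"  # used only for countries without a rule in this table


def storage_region(country_code):
    """Return the region where data collected in the given country should be stored."""
    return RESIDENCY_RULES.get(country_code.upper(), DEFAULT_REGION)


print(storage_region("de"), storage_region("IN"), storage_region("US"))
```

Keeping the rules in one table makes it easier to review them with a compliance team and to point each region at whichever cloud provider operates there.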

Why should you choose Inovar to run your strategy?

Multi-cloud strategies are inherently complex. Legacy systems, disparate data sources, and different applications can make creating a unified plan a momentous task. That’s why, to get multi-cloud right, more and more companies are searching for partners to help. These partners, known as managed services providers, help organizations embrace the cloud with speed, accuracy, and security.

There are many service providers out there. How do you know which one is the best fit for your organization?

According to the latest Forrester Wave: Multi-cloud Managed Services Providers report, enterprises should consider managed services providers that meet three criteria:

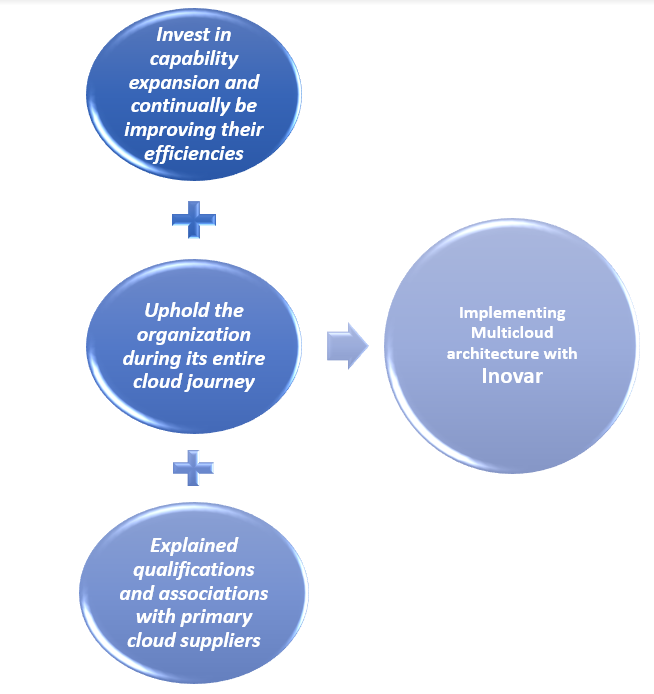

First, the provider should be able to uphold the organization during its entire cloud journey, from initial planning to migration, execution, and monitoring. At Inovar, we provide end-to-end services. We always try to know our pain points, requirements and suggest to you the best-suited solution. We offer you the supervision that you need to select the right capabilities to accomplish your business objectives- from cloud services to deployment, operation, and management.

Second, providers must have the strength to invest in capability expansion and to keep improving their efficiency. Cloud is one of the broadest platforms, and new technology emerges on it constantly. With this in mind, we always keep an eye on what is coming and suggest the best design strategy for resilience and success for years to come.

We maintain an environment where you can innovate and develop quickly, obtain the right data at the right time, move workloads around as required, and take full advantage of the latest advances in AI, automation, analytics, and more, so you can constantly iterate on and enhance everything from applications to business processes. From setting up your strategy to applying the best automated approach, we try to ensure real-time optimization and help you build the skill sets you need to become more cloud-native.

Third, providers must have demonstrated qualifications and partnerships with the primary cloud suppliers. Our vendor-agnostic strategy lets you connect with the best services from well-known providers: the Inovar multi-cloud platform is accredited by partners including Amazon Web Services (AWS) and Microsoft Azure for delivery and compliance with industry standards. Here you can choose among multiple combinations of vendors and get what you need.

Since most organizations are in business to generate money, we can safely say a multi-cloud strategy contributes to cash flow by keeping costs under control. Challenges and solutions usually seem more complicated when the people addressing them make them sound more complex. But having the most suitable plan and the best people will ensure that your strategy lasts for a very long time. If you are looking for the same, please reach out to us; we would love to collaborate.

Change management has always been one of the critical parts of a business, and nowadays it plays the role of champion in every workplace by establishing an end goal, improving profitability, and decreasing risk factors. Experts also describe change management as one of the ITIL processes responsible for controlling the life cycle of IT infrastructure. According to Gartner, 95% of organizations successfully achieve change management objectives with an effective change management strategy.

The main objective of this process is to enable changes to be made with minimal disruption to IT services. When delivering IT services, support teams follow the ITIL change management process to manage changes. But the process has changed with the advent of cloud services.

Now the question is how an organization should manage the change management process in the cloud.

Cloud technologies are now firmly established as the norm in organizations. Beyond that, organizations are tying their digital transformation strategies to a more cloud-first approach. They focus on the cloud-first approach not only for cost savings but also to speed up the delivery of new products and services, in line with ever-changing customer needs and market dynamics.

To manage these changes, an organization needs to adopt fresh thinking and take a break from the traditional norms of change management. Moving to the cloud does not mean we are immune from the incidents that stem from changes. This means we should apply practices that support cloud changes appropriately in order to enjoy the cloud benefits.

Let’s take a look at considerations of managing change in the cloud.

1. Cloud-first environments facilitate a fast change process

Agility is the first term that comes to mind when you think about cloud environments, which facilitate speed when it comes to change. You can spin up an environment with the requisite capacity and software you need for your solution without any delay. This means that changes can be performed with a few clicks and operations, taking minutes rather than days.

In a traditional IT environment, capacity upgrades required careful planning. That is not true of cloud environments, where auto-scaling ensures capacity can be upgraded automatically, on demand.

Organizations can use a variety of automation, integration, and deployment tools in cloud environments that allow them to make small, frequent changes. This results in low business risk and delivers business value at an increased rate. These include solutions such as:
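To make the auto-scaling idea concrete, here is a minimal sketch of a proportional scaling rule: capacity grows or shrinks in proportion to how far a load metric is from its target, within fixed bounds. The function name and parameters are illustrative assumptions, not any provider's API, and real auto-scalers add cooldowns and smoothing on top of this.

```python
import math


def desired_capacity(current, metric, target, min_size, max_size):
    """Scale instance count in proportion to metric / target, within bounds.

    `metric` and `target` share a unit, e.g. average CPU percent.
    """
    if current <= 0 or target <= 0:
        raise ValueError("current and target must be positive")
    raw = math.ceil(current * metric / target)
    # Clamp to the configured group size limits.
    return max(min_size, min(max_size, raw))
```

For example, four instances running at 90% CPU against a 60% target would be scaled to six, with no capacity-planning meeting required.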

- Git

- Jenkins

- Chef

- Puppet

The cloud-native approach reduces the change management effort needed to understand and prepare infrastructure for required changes. Also, because cloud changes are automated and high-velocity, routing every change through a centralized repository (where all changes are logged for categorization, prioritization, and approval) takes a back seat, since it could become a constraint.

2. Apply the best tools and strategy to control change management in the cloud:

Tools and automation alone are not enough for performing change management in the cloud. Without any control, businesses can face challenges such as validating changes and running the approval process, which reduce their ability to scale cloud computing services quickly based on business demand. Apart from that, finding the right balance for standard approvals and managing the extra expense of cloud-based subscriptions become a burden for many organizations.

Before implementing any change, always ask yourself: “What could go wrong? What will the impact be on related systems?” This step reinforces caution and may even cause you to rethink the change and attempt another, safer avenue if the risk level is too high. This is not the end; you must also set a few standards for verifying success and formulate a backout plan to reverse the change(s) if something goes awry. Change management leaders can also test the overall change process in a structured set of environments, from development through to production. These environments form a set of layers so that adverse effects of a change can be recognized and resolved before it goes live.
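The verification standard and backout plan described above can be sketched in a few lines. This is a simplified illustration that treats a system's configuration as a plain dictionary; the function name and the example settings are hypothetical.

```python
def apply_change(state, change, verify):
    """Apply `change` to `state`; back it out if `verify` fails.

    Returns True if the change was kept, False if it was reversed.
    """
    snapshot = dict(state)      # backout plan: remember the prior state
    state.update(change)        # implement the change
    if verify(state):           # standard for verification of success
        return True
    state.clear()               # something went awry: reverse the change
    state.update(snapshot)
    return False
```

A caller would pass a check such as `lambda s: s["timeout_s"] > 0`; if the check fails, the configuration is restored to exactly what it was before the change.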

Here are some of the best change management practices that a change management lead can carry out during the transition to control the overall process:

- Align change management with the SDLC – The SDLC process can be customized in the cloud environment depending on release time and the number of tasks. Change activities need to be aligned and integrated with the SDLC stages to ensure a successful transition.

- Identify company drivers for cloud computing – The change management lead can review the company’s business case, including the drivers for moving to the cloud platform. This helps to define value, guide assessment, and focus change management activities on vital performance indicators.

- A clear understanding of the new governance structure – The change management lead is responsible for ensuring that the whole IT organization is on the same page regarding the new governance structure and continues to follow the new processes without creating any shadow practices.

- Set the right expectations with the cloud user community – The change management lead needs to set and manage the right expectations with the cloud user community to avoid later dissatisfaction due to unmet expectations.

3. New solution development through an enablement approach:

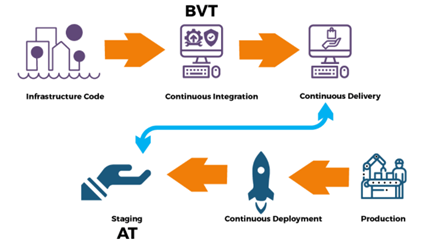

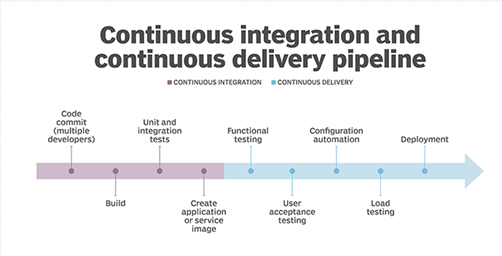

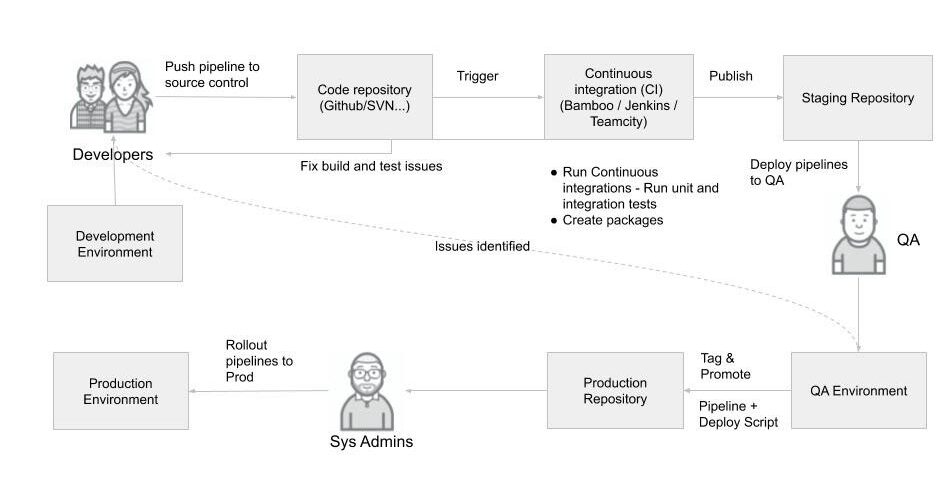

Agile and DevOps are now the mainstream of solution development in the cloud, where change management needs to move from a control perspective to one of enablement. These new approaches are self-managed in nature and resist any attempt to impose bureaucratic control, a hallmark of traditional change management. They rely on the iterative and regular deployment of new features and modifications, which can be delivered through continuous integration, delivery, and deployment processes.

Cloud technologies can reduce much complexity in the planning and execution of changes due to automation and visibility provided by these technologies.

Adoption of the cloud means that change management should now be geared towards exploiting these capabilities, therefore focusing on the development of appropriate change models. Cloud-focused change models would consider:

- Technical aspects

- Compliance requirements (data privacy and information security controls)

- Product ownership

- Additional concerns

These models facilitate faster planning, approval, and automated execution of changes within the cloud environment. How a change is handled also depends on the risk level of its model. For example:

- Low and medium risk models would be fully automated, including appropriate checks.

- High-risk or high-cost changes would be routed automatically to the relevant authority for review and approval.
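The risk-based routing just described can be sketched as follows. The risk levels, field names, and outcome labels are illustrative assumptions; a real change model would also carry cost, compliance, and ownership data.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ChangeRequest:
    summary: str
    risk: Risk
    checks_passed: bool  # result of the automated checks (tests, policy scans)


def route(change):
    """Decide how a change proceeds under a simple risk-based model."""
    if change.risk is Risk.HIGH:
        # High-risk changes always go to a human authority.
        return "needs_approval"
    if not change.checks_passed:
        # Low and medium risk changes are automated, but only if checks pass.
        return "rejected"
    return "auto_approved"
```

Keeping the routing rule this small is deliberate: the fewer changes that reach a human approver, the more attention each of those reviews can get.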

4. Change authorities need to adjust

There are several change authorities, and which one applies depends on the type of change. For example, if your change carries significant cost and risk, you need to go all the way to the board for approval, while a low-level change might only require the data center manager.

The Change Advisory Board (CAB), a well-known authority in IT changes, became the de facto target for complaints about bureaucracy in change management, because its decision-making process was not organized to support speedy delivery of business value through change.

To facilitate speed in a cloud environment, product and infrastructure teams must have greater autonomy in prioritizing which changes should be executed. Control is exercised only when the risk and expense go beyond a set threshold. If a team stays within the limit and follows the agreed models, it is free to make changes. Some organizations prefer internal peer review for decisions on changes to code or environments rather than a centralized external CAB. Large multinationals such as Google also prioritize internal self-managed capability over reliance on an external authority.

So, in a cloud environment, the visibility of change becomes the focus for higher-level change authorities, who sit outside the product or infrastructure teams. By tracking cloud monitoring dashboards, they can oversee changes in terms of:

- What changed

- Whether compliance requirements are met

- Metrics, especially those indicative of the velocity of changes such as the percentage of fully automated changes
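As a small sketch of what such a dashboard summary might compute, the function below reports what changed, which changes failed compliance, and the percentage of fully automated changes. The record fields (`summary`, `automated`, `compliant`) are hypothetical; a real dashboard would read them from a change log or deployment pipeline.

```python
def summarize_changes(changes):
    """Summarize change records for a higher-level change authority."""
    total = len(changes)
    automated = sum(1 for c in changes if c["automated"])
    return {
        "what_changed": [c["summary"] for c in changes],
        "non_compliant": [c["summary"] for c in changes if not c["compliant"]],
        # Velocity indicator: share of changes that needed no manual step.
        "automated_pct": 100.0 * automated / total if total else 0.0,
    }
```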

Whenever a change causes a major incident, the higher-level change authority takes center stage. In this situation, automated change workflows help with root cause analysis and point to the appropriate corrective action based on the result. This macro-level change authority will also coordinate and integrate the teams that manage cloud environments and the product development teams.

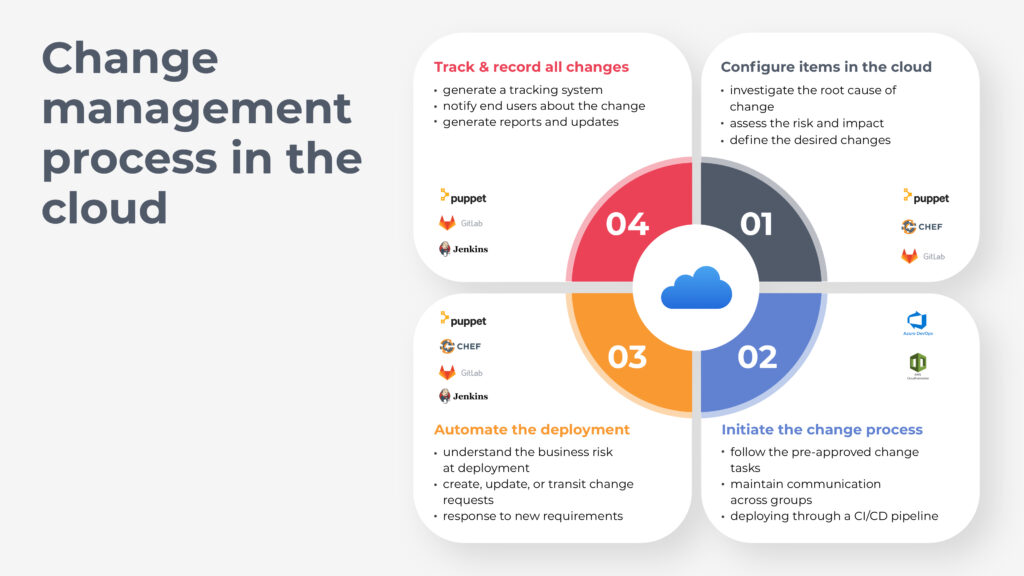

Change management process in the cloud:

Change management can be more complex in the cloud than on-premises, but it is not a lost cause. An organization can plan its change management process to automate its services and incorporate iterative deployment models with technologies like infrastructure as code. Apart from the technical aspects, organizations need to think about coordination and communication among their staff to ensure everyone is on the same page. 38%* of employees who have experienced workplace transformation say that their employer communicated effectively about the changes. A few key steps will adapt the existing change process to the new methodologies.

a. Configure items in the cloud:

In a traditional IT environment, application updates and operating system patches are installed or deployed on a server; if an application suffers a fault, an engineer may be tasked to investigate the root cause, apply a fix, or deploy a new server. In the AWS cloud, one can use Auto Scaling groups to automate the whole process. An Auto Scaling group detects failures automatically using pre-defined health checks and automatically replaces failed servers with new ones that have the same configuration.
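The detect-and-replace behavior can be illustrated with a short simulation. This is not the AWS API: the instance records, the `is_healthy` check, and the `launch` callback are stand-ins invented for the example.

```python
def reconcile(instances, is_healthy, launch):
    """Replace every unhealthy instance with a fresh one of the same config.

    `is_healthy` plays the role of a pre-defined health check and
    `launch` the role of starting a server from a stored configuration.
    """
    fleet = []
    for inst in instances:
        if is_healthy(inst):
            fleet.append(inst)
        else:
            # Same configuration, new server: no manual fix is applied.
            fleet.append(launch(inst["config"]))
    return fleet
```

The design choice worth noting is that a failed server is replaced rather than repaired, which only works because its configuration is stored outside the server itself.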

Cloud-based tools can carry out configuration changes and track the management approval process. The tools can be set up to approve or decline additional configuration or subscription changes. Chef is a configuration management tool for information technology (IT) professionals. Chef makes this easier by providing first-class support for managing cloud infrastructure.

Some organizations prefer the open-source system management tool Puppet, with which the team can build up a blueprint of the infrastructure that’s already there, define the desired changes and results, and finally create the means to achieve those results.

On the other hand, using GitLab’s OCM methodology, a change management lead develops an agile change plan and assesses the risk and impact of every change. This configuration process helps change management leads prepare a risk assessment plan and take action in case of an emergency.

b. Initiate the change process: